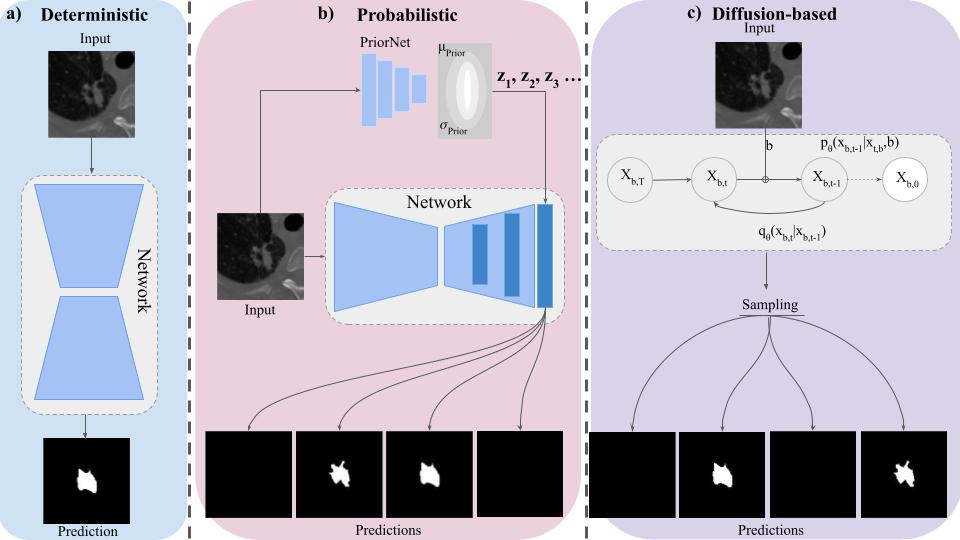

a) Deterministic networks produce a single output for an input image. b) c-VAE-based methods encode prior information about the input image in a separate network, sample latent variables from it, and inject them into the deterministic segmentation network to produce stochastic segmentation masks. c) In our method, the diffusion model learns the latent structure of the segmentation as well as the ambiguity of the dataset by modeling the way input images are diffused through the latent space. Hence, our method does not need an additional prior encoder to provide latent variables for multiple plausible annotations.